MapReduce Projects for Research Scholars.

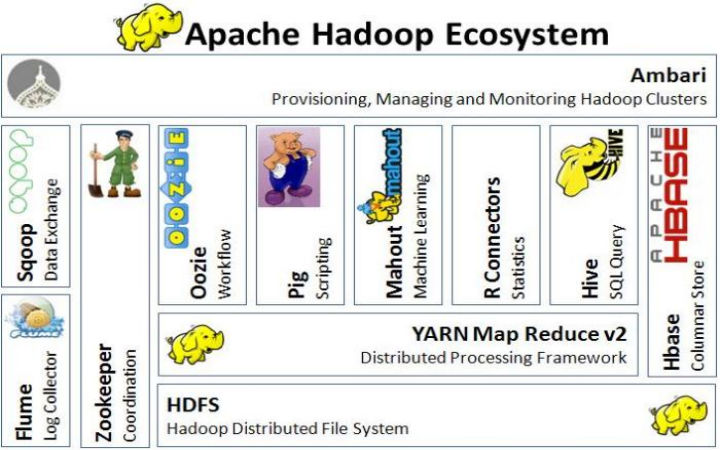

MapReduce projects provide scheduling algorithms and efficient storage for big data processing and data mining applications. MapReduce achieves high reliability and scalability and reduces the workload on individual processors. Programmers do not need knowledge of parallel and distributed systems to use it. Many computer science and information technology engineering students now carry out MapReduce projects. For large scale data processing applications, every process is executed in a distributed manner based on MapReduce concepts. MapReduce is a programming model that parallelizes data processing in small to large scale business environments. MapReduce projects are developed in combination with the Hadoop environment, which performs splitting and storage. The functions of MapReduce Projects are Map() and Reduce().

Execution framework of MapReduce Projects.

A MapReduce application carries out several steps in sequence: supplying the code for the map and reduce functions, submitting a job to the MapReduce framework, handling fault tolerance, and synchronizing data.

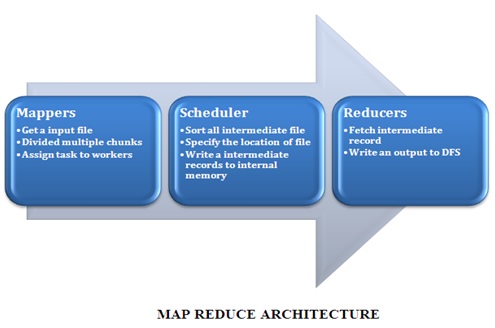

Mappers and reducers: Each job is divided into multiple smaller units, and these units are assigned to worker nodes.

Components of the map reduce phase: Worker nodes generate intermediate files and write them to local disk. The intermediate files are stored according to a scheduling algorithm. The reducer can fetch its input from internal memory. The output files are written to the distributed file system. The nodes involved are:

- Multiple worker nodes.

- One server node.

Code execution for mappers and reducers: The map reduce framework provides distributed computing and includes a scheduling algorithm. Because the scheduling algorithm takes data locality into account, local data can be identified easily at the mapper nodes.
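The idea of locality-aware scheduling can be sketched as follows. This is a minimal illustration, not the scheduler of any real framework: the function name `assign_splits`, the worker names, and the split names are all hypothetical, and the policy (prefer a worker that already stores the split, otherwise pick the least loaded worker) is an assumption made for the example.

```python
def assign_splits(split_locations, workers):
    """Assign each input split to a worker, preferring one that stores it.

    split_locations: dict mapping split name -> list of workers holding a copy.
    workers: list of all worker names.
    Returns a dict mapping split name -> (worker, is_local).
    """
    load = {w: 0 for w in workers}  # number of splits given to each worker
    assignment = {}
    for split in sorted(split_locations):
        # Workers that already hold this split on local disk.
        local = [w for w in split_locations[split] if w in load]
        candidates = local if local else workers
        # Among the candidates, pick the least loaded worker (ties by name).
        chosen = min(candidates, key=lambda w: (load[w], w))
        load[chosen] += 1
        assignment[split] = (chosen, bool(local))
    return assignment


# Hypothetical cluster layout used only for this example.
locations = {
    "split-0": ["worker-a", "worker-b"],
    "split-1": ["worker-a"],
    "split-2": ["worker-c"],
    "split-3": [],  # no known local copy, so any worker may take it
}
plan = assign_splits(locations, ["worker-a", "worker-b", "worker-c"])
for split, (worker, is_local) in sorted(plan.items()):
    print(split, worker, "local" if is_local else "remote")
```

Splits with a local copy are processed where the data already lives, so only `split-3` has to be read over the network in this example.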

Checking Fault Tolerance in MapReduce Projects.

To check on the worker nodes, the master node periodically sends hello messages. If a worker does not respond, it is marked as a failed node. To improve a map reduce application, the map reduce components are divided into sub-components.
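The hello-message check can be sketched as a small bookkeeping class. This is a simplified model under stated assumptions: the `Master` class, its method names, the worker names, and the 10 second timeout are hypothetical, and timestamps are passed in explicitly instead of being read from a clock or a network.

```python
class Master:
    """Tracks the last hello-message reply seen from each worker."""

    def __init__(self, workers, timeout):
        self.timeout = timeout  # seconds of silence tolerated (assumed value)
        self.last_reply = {w: 0.0 for w in workers}

    def record_reply(self, worker, now):
        """Note that a worker answered a hello message at time `now`."""
        self.last_reply[worker] = now

    def failed_workers(self, now):
        """Return workers that have been silent for longer than the timeout."""
        return sorted(
            w for w, t in self.last_reply.items() if now - t > self.timeout
        )


master = Master(["worker-1", "worker-2", "worker-3"], timeout=10.0)
master.record_reply("worker-1", now=12.0)
master.record_reply("worker-3", now=14.0)
failed = master.failed_workers(now=15.0)
print(failed)  # worker-2 never replied, so it is the only one marked failed
```

In a real cluster the master would then reschedule the failed worker's tasks on another node; that step is left out of this sketch.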

MapReduce Projects Framework.

- Mapper.

- Combiner.

- Partitioner.

- Shuffle & Sort.

- Reducer.
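The five stages listed above can be sketched as one small in-memory pipeline. This is an illustration only, not Hadoop code: the function names, the sample records (URL and response time in milliseconds), and the toy partitioning hash are assumptions made for the example. The job finds the slowest response per URL.

```python
from collections import defaultdict


def mapper(record):
    """Emit one (url, response_ms) pair per input record."""
    url, ms = record
    yield url, ms


def combiner(pairs):
    """Pre-aggregate one mapper's output locally: keep the max per key."""
    best = {}
    for key, value in pairs:
        best[key] = max(value, best.get(key, value))
    return list(best.items())


def partitioner(key, num_reducers):
    """Choose the reducer for a key using a toy deterministic hash."""
    return sum(key.encode("utf-8")) % num_reducers


def shuffle_and_sort(combined_outputs, num_reducers):
    """Route pairs to reducers, then group values under sorted keys."""
    partitions = [defaultdict(list) for _ in range(num_reducers)]
    for pairs in combined_outputs:
        for key, value in pairs:
            partitions[partitioner(key, num_reducers)][key].append(value)
    return [sorted(p.items()) for p in partitions]


def reducer(key, values):
    """Final aggregation: the slowest response seen for this key."""
    return key, max(values)


def run_job(splits, num_reducers=2):
    combined = []
    for split in splits:
        mapped = [pair for record in split for pair in mapper(record)]
        combined.append(combiner(mapped))
    output = {}
    for partition in shuffle_and_sort(combined, num_reducers):
        for key, values in partition:
            out_key, out_value = reducer(key, values)
            output[out_key] = out_value
    return output


# Hypothetical input, already divided into two splits.
splits = [
    [("/home", 120), ("/cart", 300), ("/home", 80)],
    [("/cart", 250), ("/home", 200)],
]
result = run_job(splits)
print(result)
```

A combiner is safe here because taking a maximum gives the same answer whether it is applied once or in stages. The partitioner avoids Python's built-in `hash` for strings because that value is randomized between interpreter runs.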

Components of MapReduce Projects.

The two components of MapReduce Projects are:

- Task tracker.

- Job tracker.

Task tracker: Runs the map and reduce phase functions as instructed.

Job tracker: Directs the activities of the task trackers.

Mappers and reducers use key-value pairs as their basic data structure in map reduce.

Keys and values may be strings, floating-point numbers, or integers. The key-value structure of map reduce can be used, for example, to collect web page URLs and their HTML contents. A simple word count algorithm is a common map reduce example: it counts the number of occurrences of each word in an input text.
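The word count mentioned above can be sketched in a few lines. This is a single-process illustration of the idea, not a distributed job: the function names and the sample input are assumptions, and words are split on whitespace after lowercasing.

```python
from collections import defaultdict


def map_words(text):
    """Map step: emit (word, 1) for every word in the text."""
    for word in text.lower().split():
        yield word, 1


def reduce_counts(word, ones):
    """Reduce step: add up the 1s collected for a single word."""
    return word, sum(ones)


def word_count(lines):
    grouped = defaultdict(list)  # stands in for the shuffle between phases
    for line in lines:
        for word, one in map_words(line):
            grouped[word].append(one)
    return dict(reduce_counts(w, v) for w, v in grouped.items())


counts = word_count(["map reduce map", "reduce the data"])
print(counts)
```

Here the keys are strings (the words) and the values are integers (the counts), matching the key and value types described in the text.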

How to Map and Reduce in MapReduce Projects?

MapReduce borrows its map and reduce functions from functional programming languages. The MapReduce framework combines the map and reduce phases to process huge amounts of data.
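The functional origin of the two operations can be seen with Python's built-in `map` and the standard library's `functools.reduce`. This small sketch runs on one machine and only shows the concepts; the sample list is an assumption.

```python
from functools import reduce

words = ["map", "reduce", "shuffle"]

# map: apply the same function to every element independently.
lengths = list(map(len, words))

# reduce: fold the mapped values into a single result.
total = reduce(lambda acc, n: acc + n, lengths, 0)

print(lengths, total)
```

Because each `map` call is independent of the others, the work can be spread over many machines, which is the property a MapReduce framework exploits.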

Map reduce applications process data using the following functional programming constructs:

- Mappers.

- Reducers.

- Sorting algorithm.

The map reduce framework takes big data as input. The framework model contains two components that execute a process in a parallel manner.

Implementing MapReduce Projects

The Hadoop map reduce implementation differs from the Google map reduce implementation. The Google implementation allows programmers to specify a secondary sort key for ordering the values presented to the reducer.

In the Hadoop implementation, the reducer is presented with a key and the values associated with that particular key. In the Google implementation, the reducer is not allowed to change the key. In the Hadoop implementation, there is no such restriction.
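The difference in key handling can be illustrated with a reducer that emits a different key from the one it received, which is the behavior the text attributes to Hadoop. This is plain Python that mimics the shape of a reducer, not the Hadoop API; the function name and the grouped input are assumptions.

```python
def reducer(key, values):
    """Receive one key with all of its values; emit a pair under a new key."""
    total = sum(values)
    # The output key (the total) differs from the input key (the word).
    yield total, key


# Hypothetical reducer input: each key with the values grouped under it.
grouped = {"map": [1, 1], "reduce": [1, 1, 1], "data": [1]}
output = sorted(
    pair for key, values in grouped.items() for pair in reducer(key, values)
)
print(output)
```

Under the restriction described for the Google implementation, the reducer would have to keep the word as its output key and a later job would do the re-keying.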

MapReduce Algorithms.

- BFS.

- Sorting.

- Searching.

- TF-IDF.

- Page Rank.

Recent advancements in MapReduce projects are:

- Overcoming real-time problems in data mining.

- Satisfying end user expectations.

- Supporting streams of data.

A key feature of MapReduce Projects is parallelization.

Applications of MapReduce Projects.

- Machine Learning.

- ETL.

- Data Validation.

- Performance Testing.

- Log Analysis.

- Data Querying.

MapReduce project processes are:

- Dache: Data-aware cache.

- Data centric Scheduling.

- Data Exchange Policy.

- Resource Provisioning.

- Hadoop.

- Hybrid Clouds.
